Technology and Science for Teaching: Assessment Revolution Strategies

What if the hours you spend grading papers, creating quizzes, and analyzing student performance could be cut in half while simultaneously providing deeper insights into student learning? According to a 2024 report from the International Society for Technology in Education, educators who integrate technology-driven assessment strategies report saving an average of 6.2 hours per week while improving student outcome visibility by 340 percent.

The assessment landscape in education has fundamentally shifted. Traditional paper-based tests and subjective grading rubrics are giving way to dynamic, real-time evaluation systems that capture learning as it happens. Technology and science for teaching now encompasses sophisticated assessment methodologies that were unimaginable just five years ago. From adaptive testing platforms that adjust difficulty in real time to portfolio systems that track growth across entire academic careers, the tools available to modern educators represent nothing short of a revolution.

This article delivers a comprehensive framework for transforming your assessment practices using cutting-edge technology and evidence-based science. You will discover three assessment paradigms that outperform traditional methods, learn implementation strategies that work across grade levels and subject areas, and gain access to practical tools you can deploy in your classroom within 48 hours. Whether you teach elementary science, high school mathematics, or university-level humanities, these strategies will fundamentally change how you measure, understand, and respond to student learning.

Three Assessment Myths Blocking Your Teaching Effectiveness

Before implementing new assessment technologies, educators must first dismantle the misconceptions that prevent meaningful change. These myths persist in faculty lounges and professional development sessions, quietly sabotaging innovation.

Myth 1: Technology-Based Assessment Reduces Academic Rigor

The Reality: Research from Stanford University’s Graduate School of Education demonstrates that well-designed digital assessments actually increase cognitive demand. When students engage with adaptive testing platforms, they encounter questions calibrated precisely to their zone of proximal development. This means every student faces appropriately challenging material, unlike traditional tests where advanced students breeze through while struggling students face repeated failure.

Consider the difference between a static multiple-choice exam and an adaptive assessment. The static exam presents identical questions to all students, regardless of their preparation level. The adaptive system, however, responds to each answer by selecting the next question from a calibrated item bank. A student who answers correctly receives a more challenging follow-up. A student who struggles receives scaffolded support questions that identify specific knowledge gaps.

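The science behind this approach draws from Item Response Theory (IRT), a psychometric framework that has revolutionized standardized testing. IRT models the probability of a correct answer as a function of the student's ability and the question's difficulty. When applied to classroom assessment, this lets a platform choose, at each step, the question that is expected to reveal the most about a student's current level of understanding.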
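To make the idea concrete, here is a minimal sketch of how an adaptive system might pick the next question. It assumes the two-parameter logistic (2PL) form of IRT, and the item bank, item names, and parameter values are hypothetical illustrations rather than the method of any particular platform.

```python
import math

def p_correct(theta, a, b):
    """2PL model: probability that a student of ability theta answers correctly.
    a = item discrimination, b = item difficulty."""
    return 1.0 / (1.0 + math.exp(-a * (theta - b)))

def item_information(theta, a, b):
    """Fisher information of a 2PL item at ability theta."""
    p = p_correct(theta, a, b)
    return a * a * p * (1.0 - p)

def pick_next_item(theta, item_bank, already_asked):
    """Return the id of the unasked item that is most informative at theta."""
    candidates = {k: v for k, v in item_bank.items() if k not in already_asked}
    return max(candidates, key=lambda k: item_information(theta, *candidates[k]))

# Hypothetical calibrated item bank: id -> (discrimination a, difficulty b)
ITEM_BANK = {"easy": (1.0, -1.5), "medium": (1.0, 0.0), "hard": (1.0, 1.5)}

# Both students have already seen the "medium" item.
next_for_strong = pick_next_item(1.4, ITEM_BANK, already_asked={"medium"})
next_for_struggling = pick_next_item(-1.2, ITEM_BANK, already_asked={"medium"})
print(next_for_strong, next_for_struggling)
```

In this sketch the stronger student is routed to the harder item and the struggling student to the easier one, because an item is most informative when its difficulty sits close to the student's estimated ability. A real platform would also re-estimate ability after every response, which is omitted here.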

Myth 2: Formative Assessment Technology Creates More Work

The Reality: Initial setup requires investment, but the long-term efficiency gains are substantial. A longitudinal study tracking 847 teachers across 12 districts found that after a 90-day implementation period, educators using technology-enhanced formative assessment spent 41 percent less time on assessment-related tasks than their peers using traditional methods.

The key lies in automation of routine tasks. When a digital platform automatically scores objective questions, flags common misconceptions, and generates performance reports, teachers redirect their expertise toward high-value activities: providing personalized feedback, designing targeted interventions, and building relationships with students.

One middle school science teacher in Colorado reported that switching to a technology-integrated assessment system allowed her to provide written feedback on student lab reports within 24 hours instead of her previous two-week turnaround. Student revision rates increased by 67 percent because feedback arrived while the learning experience remained fresh.

Myth 3: Digital Assessment Cannot Measure Higher-Order Thinking

The Reality: Modern assessment platforms have evolved far beyond simple recall questions. Today’s systems incorporate multimedia response options, collaborative problem-solving scenarios, and simulation-based tasks that evaluate analysis, synthesis, and evaluation skills.

Consider a high school chemistry assessment that presents students with a virtual laboratory environment. Rather than asking students to recall the steps of titration, the assessment requires them to design an experiment, predict outcomes, execute the virtual procedure, analyze results, and explain discrepancies between predictions and observations. This single assessment task evaluates knowledge, application, analysis, and metacognitive awareness simultaneously.

The science supporting these approaches comes from cognitive load theory and multimedia learning principles. When assessments incorporate visual, auditory, and kinesthetic elements, they engage multiple cognitive pathways and provide richer data about student understanding.

Technology and Science for Teaching: The Assessment Deep Dive

Understanding assessment technology requires examining concepts at multiple levels of sophistication. Whether you are just beginning your technology integration journey or seeking advanced strategies, this section provides actionable insights.

Beginner Level: Foundation Building

At the foundation level, technology-enhanced assessment begins with digitizing existing practices. This means converting paper quizzes to online formats, using digital gradebooks, and implementing basic response systems.

Core Concept: Digital assessment creates data trails that paper cannot. Every student response, time spent on questions, and revision pattern becomes analyzable information.

Implementation Strategy: Start with a single unit or chapter. Create a digital quiz using a free platform like Google Forms or Microsoft Forms. Include a mix of question types: multiple choice for factual recall, short answer for explanation, and one open-ended question requiring analysis. After students complete the assessment, examine the automatic summary data. Which questions did most students miss? How long did students spend on different sections?
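If you export the quiz results to a spreadsheet, the "which questions did most students miss?" check can also be done in a few lines of code. The sketch below assumes a hypothetical export with one row per student and a 1 or 0 for each question; real exports from a forms tool will need to be reshaped into this layout first.

```python
from collections import defaultdict

# Hypothetical export: one row per student, 1 = correct, 0 = incorrect.
responses = [
    {"student": "A", "Q1": 1, "Q2": 0, "Q3": 1},
    {"student": "B", "Q1": 1, "Q2": 0, "Q3": 0},
    {"student": "C", "Q1": 0, "Q2": 0, "Q3": 1},
    {"student": "D", "Q1": 1, "Q2": 1, "Q3": 1},
]

def miss_rates(rows):
    """Fraction of students who missed each question (rows must be non-empty)."""
    misses = defaultdict(int)
    for row in rows:
        for question, score in row.items():
            if question != "student":
                misses[question] += 1 - score
    return {q: count / len(rows) for q, count in misses.items()}

rates = miss_rates(responses)

# Questions missed by more than half the class, hardest first.
most_missed = [q for q, r in sorted(rates.items(), key=lambda kv: -kv[1]) if r > 0.5]
print(rates, most_missed)
```

The 50 percent threshold is an assumption chosen for illustration; pick whatever cut-off matches how you decide a question needs reteaching.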

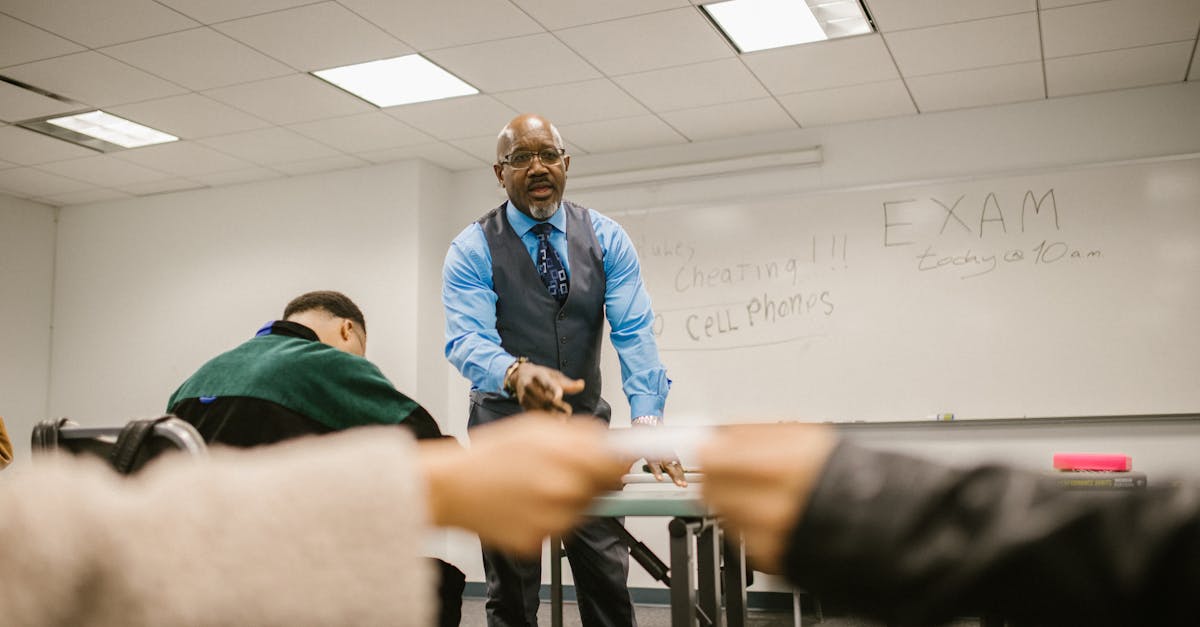

Pro Tip: Enable the “shuffle question order” and “shuffle answer options” features. These simple settings make answer copying harder, and if you record the position at which each student saw each question, they also let you check whether question position affects performance. Some research suggests that students perform worse on questions appearing later in assessments due to fatigue effects.
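Forms tools handle shuffling for you, but the mechanism is simple enough to sketch. The example below is an illustration only: the function name, student identifier, and question labels are hypothetical. It seeds the shuffle with the student's ID so the same form can be regenerated later, and it records the position of each question so that position effects can be analyzed afterward.

```python
import random

def shuffled_form(student_id, question_ids, answer_options):
    """Build a per-student question order, option order, and position record."""
    rng = random.Random(student_id)  # seeded so the form is reproducible
    order = question_ids[:]          # copy so the master list is untouched
    rng.shuffle(order)
    options = {q: rng.sample(answer_options[q], k=len(answer_options[q])) for q in order}
    positions = {q: i + 1 for i, q in enumerate(order)}  # 1-based position shown
    return order, options, positions

# Hypothetical four-question quiz with four options per question.
QUESTIONS = ["Q1", "Q2", "Q3", "Q4"]
OPTIONS = {q: ["A", "B", "C", "D"] for q in QUESTIONS}

order, options, positions = shuffled_form("student-017", QUESTIONS, OPTIONS)
print(order, positions)
```

Storing `positions` alongside each student's score is what makes it possible to compare performance on the same question when it appears early versus late.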

Intermediate Level: Strategic Integration

Intermediate practitioners move beyond digitization toward transformation. At this level, assessment becomes continuous rather than episodic, embedded within instruction rather than separate from it.

Core Concept: Formative assessment loops accelerate learning when feedback cycles shorten. The goal is to reduce the time between student action and teacher response from days to minutes.

Implementation Strategy: Implement exit ticket systems that provide immediate class-wide data visualization. As students complete brief end-of-lesson assessments, results populate a dashboard showing concept mastery distribution. Before students leave the room, you can identify which concepts require reteaching and which students need individual support.
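The logic behind such a dashboard can be sketched briefly. The example below is a simplified illustration with hypothetical student names, concepts, and cut scores: it computes the share of the class that has mastered each concept, lists concepts to reteach, and lists students who have not yet mastered anything on the ticket.

```python
# Hypothetical exit-ticket results: student -> {concept: fraction correct}
exit_ticket = {
    "Ana":   {"photosynthesis": 1.0, "respiration": 0.5},
    "Ben":   {"photosynthesis": 0.5, "respiration": 0.0},
    "Chloe": {"photosynthesis": 1.0, "respiration": 0.5},
}
MASTERY = 0.8  # assumed cut score for "mastered"

def summarize(results, mastery=MASTERY, reteach_below=0.5):
    """Return (share of class mastering each concept, concepts to reteach,
    students who mastered none of the concepts)."""
    concepts = sorted({c for scores in results.values() for c in scores})
    share_mastered = {
        c: sum(s.get(c, 0.0) >= mastery for s in results.values()) / len(results)
        for c in concepts
    }
    reteach = [c for c in concepts if share_mastered[c] < reteach_below]
    needs_support = sorted(
        name for name, s in results.items()
        if all(score < mastery for score in s.values())
    )
    return share_mastered, reteach, needs_support

share_mastered, reteach, needs_support = summarize(exit_ticket)
print(share_mastered, reteach, needs_support)
```

Both thresholds (0.8 for individual mastery, 0.5 for class-wide reteaching) are assumptions; the point is that the two questions a teacher asks before the bell are each a one-line aggregation over the same data.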

Consider implementing a “traffic light” self-assessment protocol enhanced by technology. Students rate their confidence on each learning objective using a digital interface. The system aggregates responses and cross-references self-assessments with actual performance data. Over time, patterns emerge: some students consistently overestimate their understanding, while others underestimate. This metacognitive data informs differentiated instruction strategies.
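One way to cross-reference self-assessments with performance is to compute a simple calibration gap. The sketch below assumes a hypothetical mapping from traffic-light colors to numeric confidence and an arbitrary tolerance; both are illustrative choices, not an established standard.

```python
# Assumed numeric confidence for each traffic-light rating.
CONFIDENCE = {"red": 0.0, "yellow": 0.5, "green": 1.0}

def calibration_gap(self_ratings, scores):
    """Mean of (confidence - actual score) across objectives.
    Positive means the student tends to overestimate."""
    gaps = [CONFIDENCE[self_ratings[obj]] - scores[obj] for obj in self_ratings]
    return sum(gaps) / len(gaps)

def label(gap, tolerance=0.2):
    """Turn a gap into a plain-language description."""
    if gap > tolerance:
        return "overestimates"
    if gap < -tolerance:
        return "underestimates"
    return "well calibrated"

# Hypothetical student: confident on both objectives, middling results.
ratings = {"fractions": "green", "decimals": "green"}
scores = {"fractions": 0.5, "decimals": 0.75}

gap = calibration_gap(ratings, scores)
print(gap, label(gap))
```

Tracked over several weeks, the same calculation shows whether a student's self-assessments are becoming more accurate, which is often as useful as the scores themselves.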

Pro Tip: Create assessment items that reveal misconceptions rather than simply measuring correct or incorrect responses. Design wrong answer choices that represent common student errors. When a student selects a particular incorrect option, you gain diagnostic information about their thinking process, not just confirmation that they lack understanding.
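A small sketch shows how diagnostic distractors turn into usable information. The item, answer key, and misconception labels below are hypothetical examples written for illustration.

```python
from collections import Counter

# Hypothetical item: "What is 1/2 + 1/3?" Each wrong option encodes a known error.
DISTRACTOR_MEANING = {
    "A": "added numerators and denominators (2/5)",
    "B": "multiplied instead of adding (1/6)",
    "D": "found a common denominator but did not convert numerators (2/6)",
}
CORRECT = "C"  # 5/6

def diagnose(choices):
    """Count how often each misconception was selected, most common first."""
    wrong = Counter(c for c in choices if c != CORRECT)
    return [(DISTRACTOR_MEANING[c], n) for c, n in wrong.most_common()]

class_choices = ["A", "C", "A", "B", "C", "A", "D", "C"]
report = diagnose(class_choices)
for misconception, count in report:
    print(count, misconception)
```

Here the most frequent error points to a specific reteaching target (adding numerators and denominators separately), which a simple percent-correct score would not reveal.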

Advanced Level: Systemic Transformation

Advanced practitioners leverage assessment technology to fundamentally restructure learning environments. At this level, assessment, instruction, and intervention merge into unified adaptive systems.

Core Concept: Competency-based progression replaces time-based advancement. Students demonstrate mastery before moving forward, with technology managing the complexity of individualized pacing.

Implementation Strategy: Design learning pathways with embedded checkpoints. Each checkpoint assesses prerequisite knowledge before unlocking subsequent content. Students who demonstrate mastery proceed immediately. Students who show gaps receive targeted remediation resources automatically. The teacher’s role shifts from content delivery to coaching and intervention.
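The gating logic of such a pathway can be sketched as follows. The units, cut scores, and remediation resources are hypothetical, and a real learning platform would configure this through its own interface rather than code.

```python
# Hypothetical pathway: ordered units, each with a checkpoint cut score.
PATHWAY = [
    {"unit": "ratios", "cut": 0.8, "remediation": "ratio tables practice set"},
    {"unit": "proportions", "cut": 0.8, "remediation": "scaling worked examples"},
    {"unit": "percent", "cut": 0.8, "remediation": "percent bar models"},
]

def next_step(checkpoint_scores):
    """Return (action, detail) for one student.
    action is 'unlock' (start this unit), 'remediate' (assign this resource),
    or 'complete' (every checkpoint passed)."""
    for step in PATHWAY:
        score = checkpoint_scores.get(step["unit"])
        if score is None:
            return ("unlock", step["unit"])  # all earlier checkpoints passed
        if score < step["cut"]:
            return ("remediate", step["remediation"])
    return ("complete", None)

print(next_step({"ratios": 0.9}))
print(next_step({"ratios": 0.6}))
```

A student who passes the ratios checkpoint is sent straight to proportions, while a student below the cut score receives the remediation resource for ratios before anything further unlocks.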

Implement portfolio assessment systems that capture growth over time. Digital portfolios allow students to curate evidence of learning, reflect on their development, and demonstrate competencies through authentic artifacts. Unlike traditional grades that represent snapshots, portfolios reveal trajectories.

Pro Tip: Use learning analytics to identify students at risk before they fail. Modern assessment platforms can flag warning signs: declining engagement, increasing time-on-task without corresponding improvement, or patterns suggesting frustration. Early intervention prevents small struggles from becoming major setbacks.
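The warning signs described above can be expressed as simple rules. The sketch below is illustrative only: the field names and the thresholds (a 50 percent drop in logins, a 50 percent rise in minutes) are assumptions, and commercial platforms use their own, usually more sophisticated, models.

```python
def warning_flags(weekly):
    """weekly: list of dicts with 'logins', 'minutes', 'score', oldest first.
    Compares the most recent week with the first week on record."""
    first, last = weekly[0], weekly[-1]
    flags = []
    if last["logins"] < 0.5 * first["logins"]:
        flags.append("declining engagement")
    if last["minutes"] > 1.5 * first["minutes"] and last["score"] <= first["score"]:
        flags.append("more time on task without improvement")
    return flags

# Hypothetical three-week history for one student.
history = [
    {"logins": 10, "minutes": 60, "score": 0.70},
    {"logins": 8, "minutes": 80, "score": 0.68},
    {"logins": 4, "minutes": 100, "score": 0.65},
]
print(warning_flags(history))
```

Flags like these are prompts for a conversation with the student, not conclusions; the same pattern could reflect illness or a device problem rather than a learning difficulty.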

Your Assessment Transformation Toolkit

Implementing technology-enhanced assessment requires the right tools. This curated collection represents platforms and resources that deliver results across diverse educational contexts.

Real-Time Response Systems

Tool Category: Audience response and polling platforms

Use Case: Gathering immediate feedback during instruction, checking understanding before proceeding, and engaging all students simultaneously rather than relying on volunteers.

Quick Start Tip: Begin each class with a single-question diagnostic poll related to prerequisite knowledge. Use results to calibrate your lesson pacing. If at least 80 percent of students demonstrate readiness, proceed as planned. If fewer do, address the gaps before introducing new material.

Adaptive Learning Platforms

Tool Category: Intelligent tutoring systems with built-in assessment

Use Case: Providing individualized practice with automatic difficulty adjustment, generating detailed learning analytics, and freeing teacher time for high-value interactions.

Quick Start Tip: Assign adaptive practice as homework rather than in-class work initially. This allows you to review analytics before class and plan targeted interventions. As comfort grows, integrate adaptive elements into station rotation models during class time.

Digital Portfolio Platforms

Tool Category: Evidence collection and reflection systems

Use Case: Documenting growth over time, supporting student self-assessment, and providing authentic evidence for competency-based evaluation.

Quick Start Tip: Establish clear artifact selection criteria before students begin collecting work. Without guidance, portfolios become digital storage bins rather than curated demonstrations of learning. Require students to write brief reflections explaining why each artifact represents their growth.

Assessment Item Banks

Tool Category: Curated question repositories aligned to standards

Use Case: Rapidly creating assessments, ensuring alignment to learning objectives, and accessing validated items with known psychometric properties.

Quick Start Tip: Never use item bank questions without review. Even high-quality banks contain items that may not match your instructional emphasis or student population. Select items, then modify language and contexts to align with your specific teaching.

Learning Analytics Dashboards

Tool Category: Data visualization and reporting systems

Use Case: Identifying patterns across student performance, tracking progress toward goals, and communicating with stakeholders using visual evidence.

Quick Start Tip: Schedule a weekly 15-minute “data review” session. Consistency matters more than duration. Regular brief reviews build familiarity with patterns and enable early intervention. Sporadic deep dives miss developing trends.

Common Assessment Technology Mistakes to Avoid

Even well-intentioned educators fall into predictable traps when implementing technology-enhanced assessment. Awareness of these pitfalls accelerates successful adoption.

Mistake 1: Over-Assessing

Because technology makes assessment easier, some educators dramatically increase assessment frequency without corresponding instructional benefit. Students experience assessment fatigue, and data volume overwhelms analysis capacity. The solution: assess strategically, not constantly. Every assessment should answer a specific question about student learning that will inform instructional decisions.

Mistake 2: Ignoring Accessibility

Digital assessments must accommodate diverse learners. Screen reader compatibility, extended time options, alternative input methods, and visual design considerations are not optional features. Before deploying any assessment technology, verify accessibility compliance and test with assistive technologies.

Mistake 3: Conflating Data with Understanding

Numbers and charts create an illusion of objectivity. However, assessment data requires interpretation through professional judgment. A student’s declining scores might indicate learning struggles, or they might reflect personal circumstances, testing anxiety, or technical difficulties. Data informs decisions; it does not make them.

Mistake 4: Neglecting Student Agency

The most powerful assessment systems involve students as partners. When learners understand assessment criteria, track their own progress, and set personal goals, motivation and achievement increase. Technology should enhance student agency, not reduce learners to passive subjects of measurement.

Self-Assessment: Your Assessment Practice Readiness

Before implementing new strategies, evaluate your current position. Rate yourself on each dimension:

Data Literacy: Can you interpret assessment reports, identify statistical patterns, and translate data into instructional decisions?

Technical Proficiency: Are you comfortable navigating digital platforms, troubleshooting common issues, and learning new tools independently?

Pedagogical Flexibility: Can you adjust instruction based on assessment evidence, even when it contradicts your planned sequence?

Student Communication: Do you regularly share assessment purposes and results with students in ways they understand and find motivating?

Continuous Improvement Orientation: Do you systematically evaluate and refine your assessment practices based on evidence of effectiveness?

Areas where you rate yourself lower represent priority development targets. The strategies in this article address all five dimensions, but focusing on your specific growth areas will yield the fastest results.

Frequently Asked Questions About Technology and Science for Teaching Assessment

How do I maintain assessment security with digital platforms?

Digital assessment security requires layered approaches. At the platform level, use features like question randomization, time limits, and browser lockdown modes when available. At the design level, create questions that require application rather than recall, making simple answer-sharing ineffective. At the cultural level, build classroom norms that value learning over grades. Research consistently shows that academic integrity improves when students perceive assessments as fair, relevant, and focused on growth rather than punishment. Consider implementing open-resource assessments that mirror real-world problem-solving, where the challenge lies in synthesis and application rather than memorization.

What is the appropriate balance between formative and summative assessment?

Research suggests a ratio of approximately four formative assessments for every summative assessment optimizes learning. However, this ratio matters less than the relationship between assessment types. Formative assessments should directly prepare students for summative demonstrations. If your summative assessment requires students to write analytical essays, your formative assessments should build component skills: thesis development, evidence selection, argument structure, and revision practices. Technology enables this alignment by allowing rapid iteration and immediate feedback on formative tasks, ensuring students enter summative assessments with confidence built through practice.

How can I use assessment technology with limited devices or internet access?

Resource constraints require creative solutions. Station rotation models allow small groups to access available devices while others engage in offline activities. Many assessment platforms offer offline modes that sync when connectivity returns. Paper-based assessments can still feed into digital analysis systems through scanning and optical character recognition. Consider “bring your own device” policies that leverage student smartphones for response systems. Most importantly, focus technology resources on assessments where digital tools provide the greatest advantage: adaptive practice, immediate feedback, and data aggregation. Reserve limited technology access for these high-impact applications.

How do I convince skeptical colleagues or administrators to support assessment technology adoption?

Start with evidence from your own practice. Implement technology-enhanced assessment in your classroom and document results: time savings, student performance changes, and qualitative feedback. Concrete local evidence persuades more effectively than external research. Invite observers to see the technology in action. Address concerns directly: demonstrate security features, show accessibility options, and explain how technology enhances rather than replaces professional judgment. Frame adoption as professional growth rather than criticism of current practices. Most resistance stems from fear of change or concern about competence. Providing support, training, and patience addresses these underlying concerns.

Conclusion: Your Assessment Transformation Begins Now

The integration of technology and science for teaching assessment represents one of the most significant opportunities for educational improvement in our generation. The strategies outlined in this article provide a roadmap for transformation, but knowledge without action produces no results.

Your three immediate action items:

- This week: Select one assessment from your upcoming instruction and convert it to a digital format. Focus on a low-stakes formative assessment to minimize risk while building familiarity. Analyze the data generated and identify one instructional adjustment based on results.

- This month: Implement a real-time response system in at least three lessons. Use the immediate feedback to adjust pacing and address misconceptions before they solidify. Track your own time investment and compare to traditional assessment approaches.

- This quarter: Establish a digital portfolio system for one class or subject area. Define artifact selection criteria, create reflection prompts, and schedule regular portfolio review conferences with students. Document the impact on student self-awareness and goal-setting.

The journey from traditional to technology-enhanced assessment is not instantaneous. It requires sustained effort, willingness to experiment, and tolerance for initial discomfort. However, educators who commit to this transformation consistently report that they cannot imagine returning to previous practices. The insights gained, time recovered, and student outcomes achieved make the investment worthwhile.

For comprehensive guidance on implementing these strategies and dozens more, Technology and Science for Teaching on Amazon provides the complete framework you need. This resource includes implementation templates, troubleshooting guides, and advanced strategies that build on the foundation established here. Your students deserve assessment practices that illuminate their learning and guide their growth. The tools exist. The research supports them. The only remaining variable is your decision to begin.